The major project of my final year was for my dissertation. I chose to research the effectiveness of dynamic learning algorithms in providing an adaptive, real-time finite state machine in a game environment. After doing a literature review to get up to speed on Bayesian networks, artificial neural networks and genetic algorithms, I chose to focus my research on the latter. I felt genetic algorithms could offer a working solution, and I was interested in programming one and seeing for myself exactly how it would work.

Development

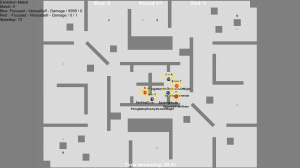

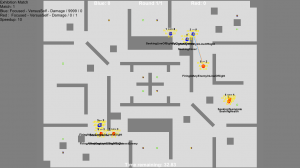

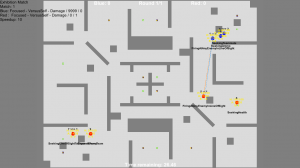

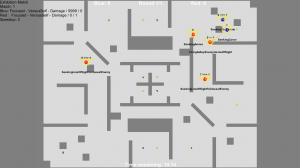

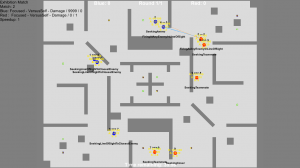

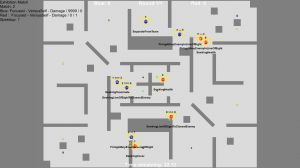

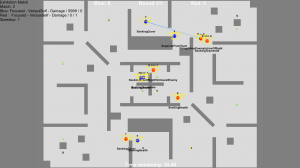

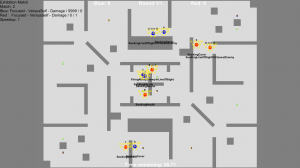

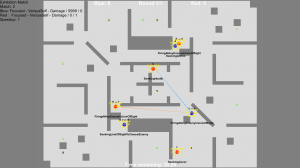

In order to test the AIs’ capacity for learning, they needed a game environment to learn in. I chose to develop my own game for the project, a simple top down shooter I called Tanks. I developed it in Unity, using the then recently released 2D tools. It was my first time using Unity so I had to learn to use the engine as I wrote the game.

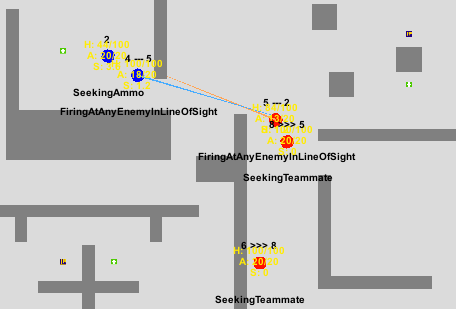

The AI can see the whole map and all the other tanks (AIs) on it, can shoot each other (including teammates), suppress enemies (which reduces their accuracy), and can pick up the ammo and health pick ups dotted about the map. The AI is a finite state machine that holds these seven states:

- Seeking line of sight to the nearest enemy

- Firing at closest enemy in line of sight

- Seeking health

- Seeking ammo

- Seeking cover

- Seeking teammate

- Separating from team

Tanks have a default behaviour of seek and destroy. Other states can be moved into depending on a set of probabilities which depend on the following parameters:

- The tank’s current health

- The tank’s current ammo

- The amount of suppression the tank is under

- The distance to the closest valid cover point

- Whether or not it has line of sight to an enemy

- How close it is to its teammates

These probabilities are the values that are evolved during the genetic algorithm, using replication, mutation and crossover. An algorithm similar to the one I used is described here.

Demos

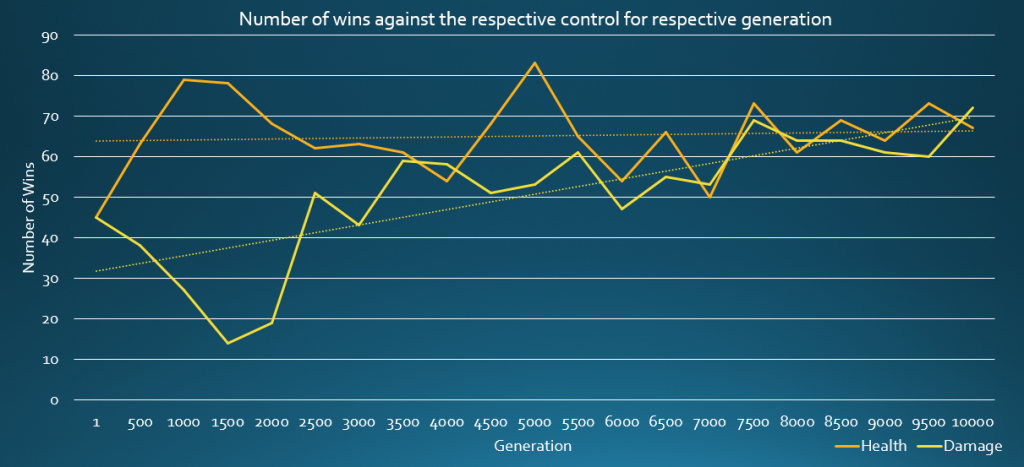

The results were promising and various randomly generated AIs showed signs of improving over time. I tested three different variants on the algorithm, using two different fitness functions: the health remaining for a team at the end, and the damage dealt by the team throughout. The below graph shows results from evolved AI fighting a control AI through its generations, and an upward trend is visible for both fitness functions:

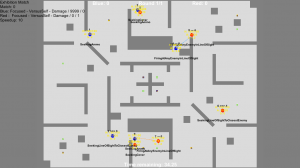

Health

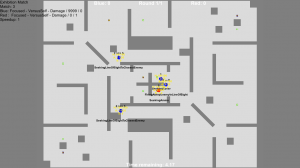

When using the tank’s health as the fitness function, evolved tanks act cautiously, coming out of cover to fire a few potshots at enemies before retreating again. You can observe this behaviour in the following demo, which requires Unity Web Player:

You can use the up and down keys to control the speed at which the simulation runs. The blue team is the evolved AI, and the red team is a randomly generated AI, the same control that was used to evaluate the evolved AIs in the above graph. The evolved team may not win every time, but they win more often than the control, and far more often than the first generation of AIs from which they descend.

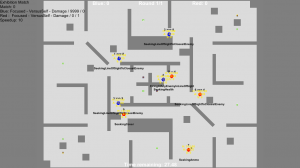

Damage

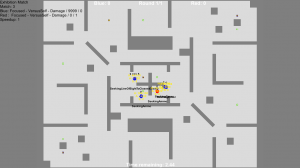

Conversely, when using the damage dealt as the fitness function, evolved tanks are far more aggressive, seeking enemies and only retreating after enduring some retaliation. You can observe this in the below demo:

The controls are the same as the other demo.

Future Work

Although the genetic algorithm showed promise for learning in the AI, the process was very slow. It would take hours for the evolution to take place (as each generated AI had to fight others to determine its effectiveness). I knew, going into the project, that this would likely be its downfall. The AIs on display here are relatively young, and more evolved AIs may show as yet more sophisticated behaviour, however I simply did not have time to find out when gathering data for my dissertation. In the future, I would be interested in running the algorithm for a very extended period of time, for example 100,000 generations (as opposed to the above AIs which are from the 5000th and 10,000th generations respectively), to see how far the effectiveness of the AI could be improved.